Can You Write the Ship?

Introduction

Most teams won’t fail because the data is wrong—they’ll fail because they don’t know what to do with it.

Can you write the ship?

AI is everywhere now. It’s in your systems, your documentation, your investigations. It’s fast. It’s helpful. It produces something that looks right—almost every time.

And that’s exactly the problem.

Because most teams aren’t asking whether the output is correct.

They’re asking whether it looks correct.

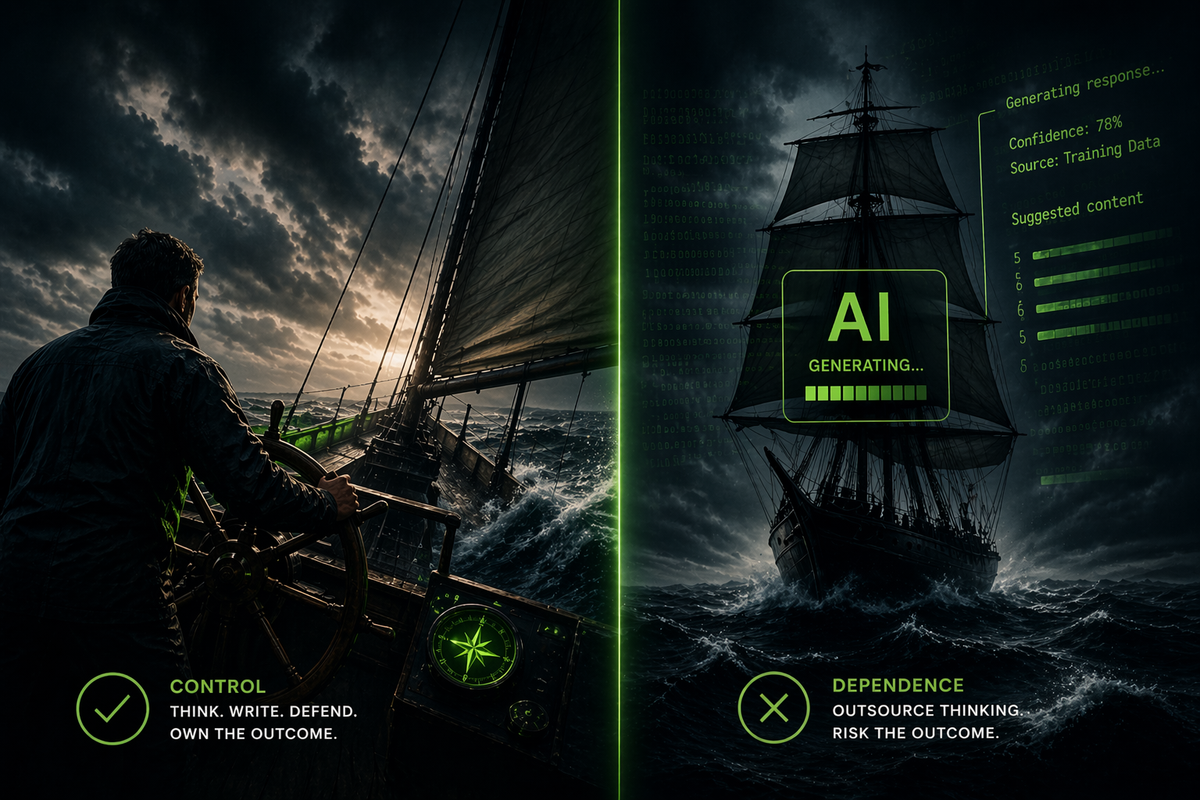

Right the Ship vs. Write the Ship

You’ve heard the phrase “Right the Ship.”

When a vessel lists too far—when it starts to lose stability—the crew corrects it.

They bring it back under control.

That’s execution recovery.

But AI introduces a different failure mode.

Not instability.

Dependence.

So the real question isn’t whether you can right the ship.

It’s whether you can still write it.

The Part No One Is Saying Out Loud

In our whitepaper on AI in GxP environments, we called out hallucination.

AI can produce something that looks structured, logical, and complete… and still be wrong.

The industry response?

“Keep a human in the loop.”

That sounds safe.

Until you ask a harder question:

What happens when the human in the loop can’t do the work without the AI anymore?

This Is Not a Technology Problem

This is a capability problem.

And it’s already happening.

- Investigations are being “assisted” instead of authored

- Responses are being “refined” instead of constructed

- Thinking is being “accelerated” instead of developed

At first, nothing breaks.

Everything actually looks better.

Cleaner.

Faster.

More consistent.

Until it doesn’t hold.

A Scenario You’ll Recognize

A deviation hits.

It’s not trivial. There’s product impact. There’s timeline pressure. QA is involved. Leadership is asking for answers.

An AI-assisted draft is generated.

It reads well.

Root cause is stated. Actions are listed. It feels complete.

It moves forward.

But during review, something doesn’t sit right.

Questions start coming back:

- “How did we land on this root cause?”

- “What evidence supports this conclusion?”

- “Why wasn’t this path explored?”

Now the pressure increases.

And the person who owns the document can’t reconstruct the logic.

Because they didn’t build it.

They reviewed it.

That’s the moment.

That’s the gap.

That’s where the Cost of Poor Quality (COPQ) starts compounding.

The COPQ Nobody Models

We talk about Cost of Poor Quality in terms of:

- Batch loss

- Deviation backlog

- Delayed disposition

- Lost capacity

But there’s a layer underneath all of that.

Weak thinking.

Because weak thinking creates:

- Poor investigations

- Extended review cycles

- Rework

- Repeated failure

That’s the Hidden Factory.

And AI—used incorrectly—doesn’t reduce it.

It hides it.

Until it gets expensive.

Human-in-the-Loop Is Breaking

“Human-in-the-loop” only works if the human is still capable.

Capable of:

- Building the logic themselves

- Challenging the output

- Rewriting it from scratch if needed

If they can’t do that, they’re approving something they don’t fully understand.

That’s not oversight or governance.

That’s exposure with a signature.

This Is How Capability Erodes

It doesn’t happen in a single moment.

It happens in small trades:

- “Let me just get a first draft…”

- “This is faster…”

- “I’ll clean it up…”

Until eventually, starting from a blank page feels harder than reviewing something pre-built.

That’s the shift.

That’s when you’ve stopped writing.

When It Actually Matters

Most of the time, this goes unnoticed.

Because most of the time, the stakes are manageable.

But GMP environments don’t fail under normal conditions.

They fail under pressure.

- Inspection

- Critical deviation

- Batch failure

- Health Authority scrutiny

In those moments, there’s no time to rely on assistance.

You either understand it—or you don’t.

Either you can explain it, or you can’t.

Either you can defend it, or you can’t.

That’s when the question becomes real.

Can you write the ship?

Where GMPWit Fits (And Where It Doesn’t)

There’s an important distinction here.

AI isn’t the problem.

Unstructured use of AI is.

Tools like GMPWit are built differently.

Not to replace thinking—but to structure it.

Not to generate answers—but to guide how answers are built.

That distinction matters.

Because the goal isn’t to remove the blank page.

It’s to make sure the person facing it knows how to think through it.

If the tool is doing the thinking for you, you’re weaker because of it.

If the tool is forcing you to think better, you’re stronger because of it.

That’s the line.

AI Doesn’t Fix This

We’ve said this before: digital doesn’t fix broken execution. It exposes it.

AI is no different.

It amplifies what’s already there.

- Strong thinking → faster execution

- Weak thinking → faster failure

So the question isn’t whether to use AI.

It’s whether your organization is strong enough to use it without its own capability degrading.

A Simple Test

Take the tool away.

No prompts. No drafts. No assist.

Can you:

- Write a deviation from scratch?

- Build a defensible root cause?

- Respond to a Health Authority clearly and directly?

If not, that’s not a training gap.

That’s a capability risk.

The Reality

At sea, if you can’t right the ship, you lose stability.

But if you can’t write the ship—you lose control entirely.

Not because AI failed.

But because you handed it something you were supposed to retain.

AI will keep getting better.

That’s not the variable.

You are.

So the real question is simple.

Are you getting sharper?

Or just getting faster?

The real risk isn’t AI hallucination—it’s human atrophy.

Next Steps

If this resonates, don’t start with tools.

Start with understanding where your cost actually sits.

→ Run the COPQ Calculator

→ Read the Whitepaper: Artificial Intelligence in GxP Environments

→ Join the GMPWit waitlist to be part of a more structured approach to AI—one that strengthens thinking, not replaces it

Because before you scale anything—AI included—you need to know whether you’re scaling strength… or scaling weakness.

Related Posts

Artificial Intelligence in GxP Environments

Artificial Intelligence (AI) in GxP environments is not a decision-making system—it is structured decision support aligned with GAMP 5. Most organizations get this wrong, positioning AI in ways that introduce unnecessary compliance risk. This whitepaper defines the correct framework—how to apply AI with discipline, maintain human ownership of decisions, and align with regulatory expectations without increasing validation burden.

Digital Doesn’t Fix Broken Execution

Pharmaceutical companies continue to invest heavily in digital transformation, yet operational outcomes remain unchanged. This article explains why visibility alone doesn’t improve execution—and how governance and discipline must come first.

Exposing the Hidden Factory: How COPQ Reveals Capacity Constraints

The Hidden Factory represents unmeasured capacity consumed by internal failure activities such as deviation investigations, production rework, and documentation errors. While these activities are tracked operationally, their economic impact is rarely quantified. Applying the Cost of Quality framework translates this consumption into financial terms, providing a basis for capacity recovery and risk-informed prioritization aligned with ICH Q9 and Q10.